I don’t like talking to robots

I have an Android phone, but I’ve disabled the voice commands. I’ve never owned an Alexa. I’m not sure what Siri does, exactly, because I’ve managed to get through life without it finding me a playlist. So when AI coding tools became hard to ignore, I came around slowly. For a while, I did what some call “one-shotting”: working as I always had, occasionally delegating a chunk of work to AI, then waiting to see what came back. It’s a really cool approach for people who like gambling.

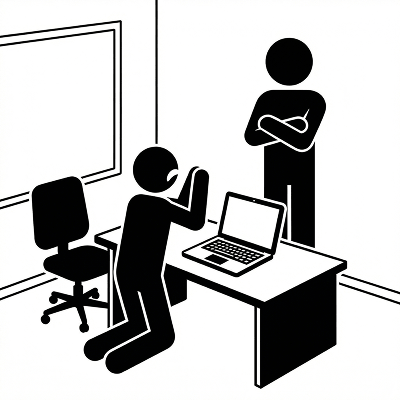

For me, part of the friction was the chat interface itself. The idea of having a conversation with the model felt foolish. I build things. I don’t negotiate with text boxes.

But spec-driven development doesn't work without that conversation. You define the problem precisely, tell the model which files to consider, describe the desired outcome, and go back and forth on the plan before the AI generates a line of code. Today's AI models are fast at coding but still dangerously inconsistent at high-level decision-making. When you work with AI, your job is to turn your design judgment into a written spec, so you can give the AI a list of low-decision steps that match what you would do if you were typing the code yourself. To get that spec right, you have to negotiate.
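To make "a written spec" concrete, here is a minimal sketch in Python of one way such a spec could be captured before it goes into the chat window. The `Spec` class, its field names, and the example file paths are all hypothetical, invented for illustration; they don't come from any particular tool or codebase.

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    """Hypothetical container for a negotiated spec; all field names are illustrative."""

    problem: str
    files_in_scope: list[str]
    desired_outcome: str
    constraints: list[str] = field(default_factory=list)
    steps: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Name the required sections that are still empty."""
        required = {
            "problem": self.problem.strip(),
            "files_in_scope": self.files_in_scope,
            "desired_outcome": self.desired_outcome.strip(),
            "steps": self.steps,
        }
        return [name for name, value in required.items() if not value]

    def render(self) -> str:
        """Turn the spec into plain text to paste into a chat window."""
        if self.missing_parts():
            raise ValueError(f"spec is incomplete: {self.missing_parts()}")
        lines = [f"Problem: {self.problem}", "Files to consider:"]
        lines += [f"- {path}" for path in self.files_in_scope]
        lines.append(f"Desired outcome: {self.desired_outcome}")
        if self.constraints:
            lines.append("Constraints:")
            lines += [f"- {rule}" for rule in self.constraints]
        lines.append("Steps:")
        lines += [f"{n}. {step}" for n, step in enumerate(self.steps, start=1)]
        return "\n".join(lines)


# Example values are invented for illustration; the paths are not real.
draft = Spec(
    problem="Support one more login provider",
    files_in_scope=["auth/providers.py", "auth/session_store.py"],
    desired_outcome="Users can sign in through the new provider",
    constraints=["Reuse the existing session store"],
)
```

In this sketch, `draft.missing_parts()` reports that the steps are still empty, and `render()` refuses to produce a prompt until they are filled in. The point of the design is that the list of low-decision steps is the output of the negotiation, so a spec without it isn't ready to hand off.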

I’ve learned this over the past six months, and I’ve become quite good at it. I rarely, if ever, write code by hand, yet I’m delivering more features than ever before. And since I’m not deferring software design to the model, I’m enforcing the same quality standards I’ve learned to uphold over a 25-year career building digital products.

What I didn’t expect was how hard this would be to teach.

Am I Picasso yet?

There is a notion (among senior management?) that LLM tools will increase all engineers’ productivity. In practice, I have not seen this. What I see is adaptable, experienced engineers achieving multiples of what they used to produce, while others are producing the same or less.

This is not a good situation for people starting out. AI coding tools appear simple because their interfaces are just text boxes. But you don’t become Picasso by arbitrarily smearing paint on a blank canvas.

Parallel implementation syndrome

I care about junior engineers for many reasons, not the least of which is that they’re my favorite people to work with! Their open-mindedness hasn’t yet atrophied, and they help me see things differently. Recently, I went on an AI learning journey with a young engineer who is smart, enthusiastic about AI tools, eager to jump in, and not at all hesitant to talk to the robot.

And yet, somehow it wasn’t working.

The first sign was the authentication system. She needed to add OAuth to an existing service, and she prompted the model to build the entire auth flow in one giant spec. Login, token refresh, session management, role-based access, and the logout flow. Everything. The model happily obliged. It produced several hundred lines of code that looked complete, with 100% code coverage and passing tests.

But when I reviewed it, the problems were structural. The model had created a new session store instead of using the one we already had. It introduced a token refresh pattern that conflicted with our API gateway. The role-based access logic duplicated business rules that already lived in a shared middleware. Technically, none of this was wrong—it worked. Architecturally, all of it was.

I sat down with her and asked a simple question: “Before you prompted this, did you know how we handle sessions today?” She paused. “Not exactly.” That was the gap. She hadn’t mapped the territory before asking the model to build on it.

The next attempt went differently, but not by enough. She asked the model to modify the login flow to support a new provider. This time, she was scoping smaller, which was good. But she described the change in terms of what she wanted the UI to do, not in terms of what the existing code already handled. The model, having no reason to know about our existing provider abstraction, wrote a parallel implementation from scratch. Same outcome: working code, wrong architecture.

We talked through what had happened. I told her to think of the model the way she’d think of a new contractor on the team. Talented, fast, zero experience with our codebase. You wouldn’t hand a contractor this project and walk away. You’d say: here’s our session store, here’s the middleware, here’s the pattern for adding a new provider. Fit your work into this.
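A minimal sketch in Python of what "fit your work into this" can look like. Every name here (`AuthProvider`, `SessionStore`, `LoginService`, `NewProvider`) is a hypothetical stand-in, not the actual service described above, and the token exchange is a placeholder. The assumption is only that a session store and a provider pattern already exist, so the new work shrinks to one small class.

```python
from typing import Optional, Protocol


class AuthProvider(Protocol):
    """Hypothetical stand-in for an existing provider abstraction."""

    name: str

    def exchange_code(self, code: str) -> str:
        """Trade an authorization code for a user id."""
        ...


class SessionStore:
    """Hypothetical stand-in for the one session store the service already has."""

    def __init__(self) -> None:
        self._sessions: dict[str, str] = {}

    def create(self, user_id: str) -> str:
        session_id = f"session-{len(self._sessions) + 1}"
        self._sessions[session_id] = user_id
        return session_id

    def user_for(self, session_id: str) -> Optional[str]:
        return self._sessions.get(session_id)


class LoginService:
    """Existing login flow: providers plug in, sessions go through one store."""

    def __init__(self, store: SessionStore) -> None:
        self._store = store
        self._providers: dict[str, AuthProvider] = {}

    def register(self, provider: AuthProvider) -> None:
        self._providers[provider.name] = provider

    def login(self, provider_name: str, code: str) -> str:
        provider = self._providers[provider_name]
        user_id = provider.exchange_code(code)
        return self._store.create(user_id)


class NewProvider:
    """The only new code in this sketch: one class that fits the existing pattern."""

    name = "new-provider"

    def exchange_code(self, code: str) -> str:
        # Placeholder: a real provider would call its token endpoint here.
        return f"user-for-{code}"


store = SessionStore()
service = LoginService(store)
service.register(NewProvider())
session_id = service.login("new-provider", "abc")
```

Under these assumptions, the session created for the new provider lands in the same store as every other session. A parallel implementation would instead bring its own store and its own login path, which is the structural problem the review caught.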

She got the concept. But the next few rounds showed me that the concept was the easy part. She’d get a single plan from the model and green-light it without pushing for alternatives. She saw the model’s willingness to make expansive code changes as being thorough; I saw it as needless risk. She didn’t yet have the instinct to say “no, that’s too much surface area for this change.”

An experienced engineer would have handled that same OAuth task in six or seven focused rounds, each one scoped to a piece of the system they already understood.

Soft skills are the new hard skills

This might sound like overhead that would erase the productivity gains of AI coding. But these aren’t new skills. Experienced engineers have been doing this in their heads all along. The difference is that spec-driven development externalizes the design planning for every task. The negotiation that used to happen internally, between you and your own judgment, now happens in a chat window.

Before you open the chat, you need to know what you want. Not the implementation, but the behavior, the constraints, the shape of the thing. You need to know your codebase well enough to tell the model where its work has to fit. When the model comes back with an answer, it'll probably run. That's not enough. You need to know whether it actually fits the system you already have.

Sometimes the model is right and you’re wrong, and you need the humility to evaluate that fairly.

Across the whole process, what separates people who use these tools well from people who use them fast is patience: the willingness to go back and forth five or six times instead of accepting something mediocre at round two. There’s also the skill of writing precisely about code without writing code, describing data flows, edge cases, and failure modes in plain language. And there’s taste, which is harder to define but easy to recognize: a sense of what good software feels like from the long-term maintenance perspective, not just whether it runs.

And then there’s the uncomfortable part. Most of these skills are pattern recognition built from years of getting things wrong. You recognize a bad architectural decision because you’ve lived with the consequences of one. You push back on the model’s first answer because you’ve shipped your first idea before and regretted it.

Junior engineers are being put into situations where they need to perform design negotiation at a high level, but there doesn’t appear to be a curriculum for that. It’s time to shift away from “LeetCode” to design negotiation, and this will be the subject of future posts in this series.

Addendum

Soft Skills for Negotiating a Spec with AI

During the Negotiation

- Code reading fluency: Scan what the model produces for structural soundness, not just syntax. You're reading for fit, not for bugs. Why it matters: The model can generate valid code that doesn't belong in your system. You need to catch that fast.

- Architectural taste: Recognize when a solution is technically valid but wrong for this system. Why it matters: The model doesn't know what "wrong for us" means. You do.

- Creative steering: Find alternative framings when the model is stuck. Restate the problem, offer an analogy, constrain it differently. Why it matters: The model responds to how you frame things, and a better frame produces a better result.

- Knowing when to take a position: The model presents options neutrally. You decide, and articulate why one approach is better for your context. Why it matters: That's engineering judgment, and it takes years to build.

- Productive skepticism: Assume the model's first answer is a draft, not a solution. Not cynicism, but the habit of pressure-testing before accepting. Why it matters: First-pass outputs are seductive because they're fluent. Fluency is not correctness.

- Knowing what you don't know: Recognize when the model might be right and you might be wrong. Why it matters: The negotiation goes both ways. Sometimes it surfaces an approach you hadn't considered, and you need the humility to evaluate it fairly.

Across the Whole Process

- Patience: Go back and forth five or six times instead of accepting something mediocre at round two. Why it matters: This separates people who use the tool well from people who use it fast.

- Technical communication: Write precisely about code without writing code. Describe data flows, state changes, edge cases, and failure modes in plain language. Why it matters: This is harder than most engineers think, and it's the primary interface between you and the model.

- Holding complexity in your head: Track how the current decision interacts with three other parts of the system the model doesn't know about. Why it matters: The model has no persistent architectural context. You are the working memory.

- Taste: A sense of what good software feels like from a user's perspective. Not just "does it work" but "is this the right experience, the right behavior, the right level of complexity." Why it matters: Without this, you'll accept solutions that are functional but wrong.

- Knowing when to stop: Recognize when the spec is good enough to hand off to execution, and when you're over-refining. Why it matters: Diminishing returns are real. At some point, further negotiation costs more than it improves.